Bias in AI Prompts: Understand, Detect and Prevent AI Bias

By some estimates, up to 85% of AI systems exhibit some form of bias, affecting areas like hiring and healthcare. As AI becomes more embedded in daily life, understanding how bias emerges in prompts is crucial for ensuring fairness and accuracy in AI-generated results.

So, how does bias in AI prompts happen, and what can you do to prevent it? Let’s have a closer look.

1. What is Bias in AI Prompts?

When interacting with AI systems, have you ever noticed that some responses feel skewed, incomplete, or even unfair? This is often the result of bias in AI prompts. But what exactly does that mean, and why does it matter to you?

Bias in AI prompts occurs when:

- The language of the prompt influences the AI to produce unbalanced or partial responses.

- Data used to train the AI contains historical biases or reflects societal stereotypes.

- The design of the prompt embeds human assumptions that affect the AI’s output.

Why You Should Care

Bias in AI affects both the fairness and accuracy of the information you receive. Here’s why you should be concerned:

- Misinformation: Biased outputs can spread false or incomplete information.

- Reinforced stereotypes: AI can unintentionally amplify societal biases.

- Exclusion of perspectives: Important viewpoints or groups may be ignored.

For businesses, this could lead to decisions based on flawed data, which can affect hiring, marketing, and customer service. For individuals, it could mean perpetuating existing inequalities and limiting opportunities.

Example:

- Imagine you use AI to help write a job description. If the prompt contains gender-biased language (e.g., “strong leadership” associated with males), the AI might suggest phrasing that discourages certain groups from applying. This seemingly small issue can have a broad impact on diversity and inclusion in the workplace.

Why Addressing Bias Matters

Understanding and addressing bias in AI prompts ensures that:

- The outputs you receive are accurate, fair, and inclusive.

- You use AI tools responsibly and avoid unintentionally contributing to biased outcomes.

Once you know how these biases arise, you can take proactive steps to reduce their impact and promote fairness in AI systems.

How Training Data Bias Contributes to AI Prompt Bias

Bias in AI prompts happens for two main reasons: biased training data and human bias in the design of prompts. These factors shape how AI generates its responses, often without users realizing it. Let’s break them down to understand how this works.

How Sampling Bias Affects AI Training Data

Sampling bias occurs when AI systems are trained on datasets that do not accurately represent the entire population. This can result in skewed outputs, as certain groups may be overrepresented while others are underrepresented.

- Example: An AI model trained primarily on data from one demographic might fail to generalize well to other groups, leading to biased results in applications like healthcare or hiring.
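A simple way to look for sampling bias is to compare each group's share of the dataset with its share of the population the system is meant to serve. The sketch below is illustrative only: the function name `representation_gaps`, the group labels, the reference shares, and the 5-point tolerance are all assumptions chosen for the example, not part of any standard tool.

```python
from collections import Counter

def representation_gaps(samples, reference_shares, tolerance=0.05):
    """Return groups whose dataset share differs from the expected share.

    samples: iterable of group labels, one per record
    reference_shares: dict of group label -> expected share (0..1)
    tolerance: largest absolute difference that is still accepted
    """
    counts = Counter(samples)
    total = sum(counts.values())
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        if abs(observed - expected) > tolerance:
            # Positive = overrepresented, negative = underrepresented
            gaps[group] = round(observed - expected, 3)
    return gaps

# Hypothetical dataset: 80 records from group "A", 20 from group "B"
records = ["A"] * 80 + ["B"] * 20
print(representation_gaps(records, {"A": 0.5, "B": 0.5}))
```

A balanced dataset returns an empty dictionary. What counts as an acceptable gap depends on the application, so the tolerance should be set deliberately rather than copied from this example.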

2. How Algorithmic Bias and Training Data Bias Affect AI Outputs

AI systems rely on large datasets to learn how to generate responses. However, these datasets are often pulled from historical records or the internet, which can contain biases reflecting societal inequalities. When AI is trained on such data, it may unknowingly perpetuate those biases in its outputs.

How this happens:

- Data that reflects gender, racial, or social biases feeds into AI training.

- AI absorbs these biases and reproduces them in responses.

- Biased datasets reinforce stereotypes (e.g., assuming certain jobs are gender-specific).

Example: An AI trained on older job descriptions may suggest that leadership roles require traditionally masculine traits, sidelining qualities like empathy or collaboration.

Human Bias in Prompt Design: How Our Language Shapes AI Outputs

Even if the data is balanced, the prompts we design can introduce bias. The way you phrase a question, the words you choose, and the assumptions you include can steer AI responses in particular directions, often reinforcing societal stereotypes or personal biases.

How this happens:

- Prompts reflect our unconscious assumptions.

- Vague or unbalanced language leads to biased responses.

- AI tries to match the tone and perspective of the prompt, which can skew results.

Example: A prompt like “What makes a great CEO?” might generate traits like assertiveness or competitiveness, neglecting other important qualities like emotional intelligence, which are often undervalued in leadership discussions.

Case Study: Biased Outputs in Generative AI Tools

A study by MIT Media Lab found that when AI tools were asked to create resumes, they often produced different versions for men and women, despite the same role being applied for. This happened because the AI was trained on biased data that associated certain career paths with specific genders. Even though the prompt was neutral, the AI’s output reflected these embedded biases.

Case Study: Criminal Justice AI Bias in Predictive Policing

Predictive policing tools like PredPol can reinforce racial biases by relying on historical crime data. Because that data reflects past policing practices, these tools often over-target minority communities. Risk-assessment tools show a similar pattern: a 2016 ProPublica analysis found that COMPAS, a system used to predict recidivism, disproportionately labeled Black defendants as high risk, which can feed into unfair sentencing and parole decisions.
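One way to make a disparity like this measurable is to compare false positive rates across groups: among people who did not re-offend, how many were still labeled high risk? The sketch below uses invented records and placeholder group names ("X" and "Y"); it is not the method or the data of any published analysis.

```python
from collections import defaultdict

def false_positive_rates(records):
    """Compute the false positive rate for each group.

    records: iterable of (group, predicted_high_risk, reoffended) tuples
    """
    flagged = defaultdict(int)    # labeled high risk but did not re-offend
    negatives = defaultdict(int)  # everyone who did not re-offend
    for group, predicted_high_risk, reoffended in records:
        if not reoffended:
            negatives[group] += 1
            if predicted_high_risk:
                flagged[group] += 1
    return {group: flagged[group] / negatives[group] for group in negatives}

# Invented toy data: (group, predicted_high_risk, reoffended)
toy_records = [
    ("X", True, False), ("X", True, False), ("X", False, False), ("X", False, False),
    ("Y", True, False), ("Y", False, False), ("Y", False, False), ("Y", False, False),
    ("X", True, True), ("Y", True, True),
]
print(false_positive_rates(toy_records))
```

A large gap between groups means one group bears more of the cost of the tool's mistakes, even if overall accuracy looks similar.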

Case Study: Healthcare AI Bias in Treatment Prioritization

In healthcare, an AI tool used to prioritize care systematically favored white patients over Black patients. This occurred because the algorithm used healthcare costs, not health outcomes, as its measure of need. A 2019 study published in Science found that, as a result, Black patients were less likely to be flagged for additional care than equally sick white patients.
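The effect of choosing cost as a stand-in for need can be shown with a toy ranking. Everything in the sketch below is invented for illustration: the patient records, the field names `chronic_conditions` and `past_cost`, and the idea of selecting the top two patients. It only demonstrates how the choice of target changes who is prioritized.

```python
def top_k(patients, key, k):
    """Return the ids of the k patients with the highest value for `key`."""
    ranked = sorted(patients, key=lambda p: p[key], reverse=True)
    return [p["id"] for p in ranked[:k]]

# Invented toy records: p1 and p2 are equally sick, but p1 has lower
# past spending (for example, because of reduced access to care).
patients = [
    {"id": "p1", "chronic_conditions": 4, "past_cost": 3000},
    {"id": "p2", "chronic_conditions": 4, "past_cost": 6000},
    {"id": "p3", "chronic_conditions": 1, "past_cost": 5000},
]

by_cost = top_k(patients, "past_cost", 2)           # cost used as a proxy for need
by_need = top_k(patients, "chronic_conditions", 2)  # a direct measure of health
print("Prioritized by cost:", by_cost)
print("Prioritized by need:", by_need)
```

Ranking by cost drops p1 in favor of a healthier patient who simply spent more, which is the kind of proxy problem described above.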

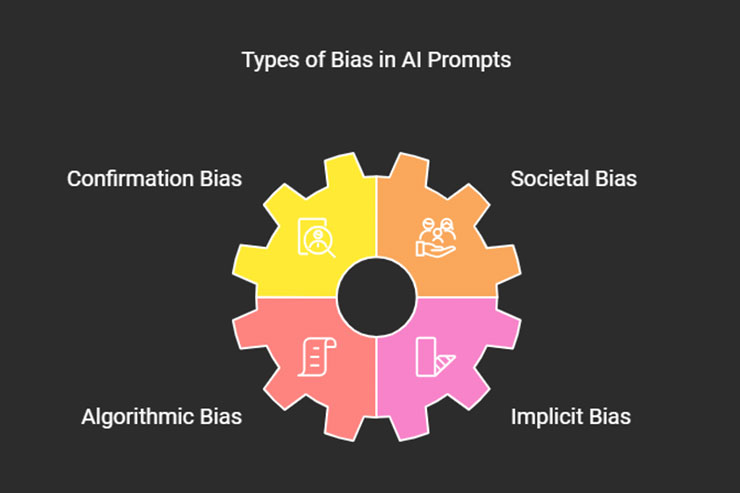

3. What Are the Different Types of Bias in AI Prompts?

When using AI, it’s essential to recognize that biases can emerge in various forms, often without you even realizing it. Understanding these different types of bias will help you be more mindful when interacting with AI and designing prompts. Here are some common forms of bias you should watch for:

1. Confirmation Bias: Reinforcing Existing Beliefs

Confirmation bias occurs when AI tends to reinforce the expectations or beliefs you had when designing the prompt. Essentially, the AI provides answers that confirm what you were already thinking, rather than offering new or balanced information.

How it happens:

- Prompts are framed in a way that suggests a specific answer.

- AI tailors its response to match the assumptions within the prompt.

Example: If you prompt, “Why are electric cars better than gas cars?” the AI will likely list reasons supporting electric vehicles, reinforcing the idea that they are superior without offering counterpoints.

2. Societal Bias

Societal bias reflects stereotypes, prejudices, or inequalities present in society. When AI is trained on biased societal data, it can reproduce and even amplify these biases in its outputs.

How it happens:

- Data used to train AI reflects historical or cultural biases.

- AI replicates societal stereotypes in its responses.

Example: If AI is asked to suggest career options for women and men, it might propose traditional “female” roles like nursing for women and technical roles for men, reflecting societal expectations.

3. Algorithmic Bias

Algorithmic bias results from how AI systems are designed and trained. Even if the data itself is neutral, the way algorithms process and prioritize information can introduce bias, affecting the fairness of the results.

How it happens:

- Algorithms are designed with assumptions that may skew results.

- The way AI ranks or sorts data can unintentionally favor certain groups or outcomes.

Example: An AI used in hiring might favor resumes from candidates with a particular educational background or gender, not because of the data itself, but due to the algorithm’s underlying preferences or training model.

4. Implicit Bias

Implicit bias refers to biases that are not consciously introduced into the system but are present nonetheless. These biases often arise from the language used in prompts or subtle cultural assumptions baked into the data.

How it happens:

- Unconscious cultural or linguistic assumptions shape the prompts.

- The AI outputs reflect hidden biases that even the user or developer might not be aware of.

Example: When prompted to describe a “professional hairstyle,” AI might suggest images or descriptions that primarily reflect Western beauty standards, excluding diverse hair types.

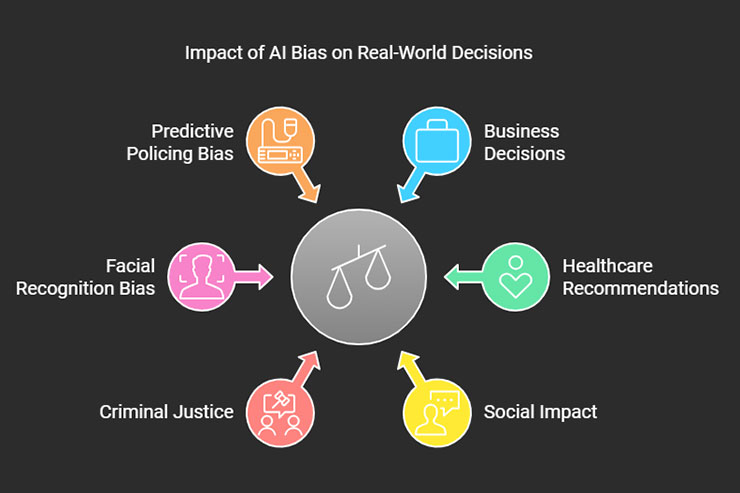

4. How Does Bias in AI Prompts Affect Real-World Decisions?

Bias in AI prompts can have far-reaching consequences in real-world decision-making, influencing everything from hiring processes to healthcare recommendations. These biases can lead to unfair outcomes, perpetuate inequalities, and affect critical decisions in both personal and professional contexts.

Here’s how AI bias can manifest in everyday life:

Business Decisions

AI tools are often used to make decisions in hiring, marketing, and customer service. If the prompts and data are biased, these decisions can reinforce stereotypes and exclude qualified candidates or customers.

- Example: A company using AI to screen resumes might inadvertently prioritize male applicants for leadership positions due to biased training data, reducing diversity in hiring.

Healthcare Recommendations

AI systems in healthcare rely on data to suggest treatment options or diagnose conditions. If the data is biased, it could lead to recommendations that are less effective or even harmful to certain groups.

- Example: An AI tool trained on data from predominantly white populations may under-diagnose health issues in people of color because the symptoms present differently.

Social Impact

AI bias can perpetuate societal inequalities by reinforcing harmful stereotypes or excluding certain groups from important conversations or opportunities.

- Example: An AI chatbot trained on biased social media data may generate responses that reflect racial or gender stereotypes, influencing public discourse negatively.

Criminal Justice

Bias in AI used in criminal justice systems can lead to unjust outcomes, such as biased sentencing or parole decisions.

- Example: AI tools used to assess the likelihood of re-offending may disproportionately assign higher risk scores to minority groups based on biased historical data, influencing parole or sentencing decisions.

Facial Recognition Bias

Facial recognition bias arises when AI systems, often trained on lighter-skinned or male-dominated datasets, perform poorly on women and people of color. This leads to misidentification, unfair targeting, and reduced accuracy, especially in law enforcement applications.

- Example: Studies have shown that facial recognition systems exhibit higher error rates for people with darker skin tones, resulting in significant concerns about privacy, discrimination, and justice.

Predictive Policing Bias

Predictive policing bias arises when AI systems used by law enforcement rely on biased historical data, leading to disproportionately higher targeting of minority communities.

- Example: These systems often reinforce racial profiling by predicting crime in areas with a history of over-policing, rather than providing objective risk assessments. This can perpetuate unjust practices and deepen societal inequalities.

5. How Can You Detect Bias in AI Prompts?

Detecting bias in AI prompts is crucial for ensuring fairness and accuracy in AI-generated outputs. While AI systems are designed to assist, they are only as neutral as the data and prompts provided. Here are some tools and strategies to help you identify bias in AI outputs:

1. Keyword and Language Sensitivity

Carefully examine the language used in your prompts. Biased language or assumptions can lead to skewed outputs, so it’s important to phrase your questions neutrally.

- Tip: Avoid leading questions or prompts that assume a particular answer. For instance, instead of asking, “Why are women less suited for leadership roles?” try, “What are the key qualities of effective leaders?”
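A first pass over prompt wording can be partly automated. The sketch below flags two kinds of loaded phrasing with regular expressions. The pattern list and its labels are illustrative assumptions and far from exhaustive, so treat this as a reminder to reread a prompt, not as a bias detector.

```python
import re

# Illustrative, non-exhaustive patterns (assumptions for this example)
LEADING_PATTERNS = {
    "presupposes a comparison": re.compile(
        r"\bwhy (are|is|do|does)\b.*\b(better|worse|less|more)\b", re.IGNORECASE
    ),
    "absolute wording": re.compile(
        r"\b(always|never|obviously|clearly|everyone knows)\b", re.IGNORECASE
    ),
}

def lint_prompt(prompt):
    """Return the labels of all patterns that match the prompt."""
    return [label for label, pattern in LEADING_PATTERNS.items()
            if pattern.search(prompt)]

print(lint_prompt("Why are women less suited for leadership roles?"))
print(lint_prompt("What are the key qualities of effective leaders?"))
```

The first prompt is flagged because it asks the AI to explain a conclusion rather than examine it. The neutral version returns an empty list.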

2. Bias Detection Tools

Several tools have been developed to help identify and flag bias in AI-generated outputs:

- IBM’s AI Fairness 360: This tool helps assess bias in AI systems and offers techniques for mitigating bias.

- Google’s What-If Tool: A visualization tool that allows you to explore how changes in data or model parameters affect outcomes, helping you spot biased patterns.
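Fairness toolkits report group-level metrics such as disparate impact and statistical parity difference. To show what those numbers mean, here is a plain-Python sketch that computes them by hand. It does not use any toolkit's API; the function names and the toy outcome lists are assumptions for the example.

```python
def selection_rate(outcomes):
    """Share of favorable outcomes (1 = favorable, 0 = unfavorable)."""
    return sum(outcomes) / len(outcomes)

def disparate_impact(unprivileged, privileged):
    """Ratio of selection rates; 1.0 means both groups are selected equally often."""
    return selection_rate(unprivileged) / selection_rate(privileged)

def statistical_parity_difference(unprivileged, privileged):
    """Difference of selection rates; 0.0 means both groups are selected equally often."""
    return selection_rate(unprivileged) - selection_rate(privileged)

# Invented screening outcomes for two groups of ten applicants each
group_a = [1, 0, 0, 0, 1, 0, 0, 0, 0, 0]  # 2 of 10 selected
group_b = [1, 1, 0, 1, 0, 1, 0, 0, 1, 0]  # 5 of 10 selected

print(round(disparate_impact(group_a, group_b), 2))
print(round(statistical_parity_difference(group_a, group_b), 2))
```

A common rule of thumb treats a disparate impact ratio below 0.8 as a warning sign, although the right threshold depends on context and applicable law.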

3. Examine Outputs for Stereotypes

Pay close attention to the outputs generated by AI. Are they reinforcing stereotypes or showing favoritism to certain groups? Regularly reviewing the results with a critical eye can help you catch biased patterns.

- Example: If your AI consistently suggests certain careers for men and others for women, that’s a sign of societal bias in the system.
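A review like this can be made more systematic by collecting the AI's suggestions for each group and listing the ones that appear for only one group. The sketch below assumes you have already gathered the suggestions yourself; the group names and career lists are invented sample data.

```python
from collections import defaultdict

def exclusive_suggestions(suggestions_by_group):
    """Return, for each group, the items suggested only to that group."""
    seen_in = defaultdict(set)
    for group, items in suggestions_by_group.items():
        for item in items:
            seen_in[item.lower()].add(group)

    exclusive = {group: [] for group in suggestions_by_group}
    for item, groups in sorted(seen_in.items()):
        if len(groups) == 1:
            exclusive[next(iter(groups))].append(item)
    return exclusive

# Invented sample of collected AI suggestions
collected = {
    "women": ["Nurse", "Teacher", "Engineer"],
    "men": ["Engineer", "Pilot", "Teacher"],
}
print(exclusive_suggestions(collected))
```

Items that show up for one group only are not proof of bias, but they are a good place to start reading the outputs more closely.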

4. Use Diverse and Representative Data

Ensure that the data feeding into your AI system is diverse and represents different groups fairly. Biased data leads to biased outputs.

- Tip: If possible, review the datasets used to train the AI and ensure that they include a wide range of perspectives, cultures, and backgrounds.

5. Perform A/B Testing on Prompts

Try testing different versions of your prompts to see how the AI responds. By comparing outputs from multiple prompts, you can detect if certain wording or phrasing introduces bias.

- Example: Compare responses to prompts like “What makes a great leader?” versus “What qualities do successful leaders possess?” to ensure you’re getting a balanced view.
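A comparison like this can be scripted as a small harness that sends each prompt variant to the model and counts how often selected trait words appear. In the sketch below, `fake_generate` is a stand-in with canned responses, used so the example runs without any AI service; in practice you would pass your own function that calls the model. The trait list is also an assumption.

```python
import re

def trait_counts(text, traits):
    """Count how often each single-word trait appears in the text."""
    words = re.findall(r"[a-z]+", text.lower())
    return {trait: words.count(trait) for trait in traits}

def compare_prompts(prompts, generate, traits):
    """Run every prompt through `generate` and tally trait words per prompt."""
    return {prompt: trait_counts(generate(prompt), traits) for prompt in prompts}

def fake_generate(prompt):
    """Stand-in for a real model call (canned, invented responses)."""
    canned = {
        "What makes a great leader?":
            "A great leader is assertive, competitive and decisive.",
        "What qualities do successful leaders possess?":
            "Successful leaders are empathetic, collaborative and assertive.",
    }
    return canned[prompt]

traits = ["assertive", "competitive", "empathetic", "collaborative"]
results = compare_prompts(
    ["What makes a great leader?", "What qualities do successful leaders possess?"],
    fake_generate,
    traits,
)
for prompt, counts in results.items():
    print(prompt, counts)
```

With a real model, run each variant several times before drawing conclusions, since responses can vary from one run to the next.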

6. How Can You Avoid Bias in AI Prompts?

Creating fair and unbiased AI prompts is essential to ensuring that AI systems generate responses that are accurate and inclusive. Fortunately, there are several strategies you can follow to reduce or remove bias from your prompts:

1. Use Neutral Language in Your Prompts

The way you phrase your prompts can significantly influence AI-generated outputs. Avoid assumptions or leading questions that might steer the AI toward biased responses.

- Tip: Frame your questions in neutral, objective terms. Instead of asking, “Why are men better suited for technical roles?” try, “What skills are important for success in technical roles?”

2. Be Specific and Clear

Vague or broad prompts can lead to ambiguous responses that may inadvertently include biased assumptions. Be specific in what you’re asking to guide the AI toward balanced answers.

- Tip: Instead of “What are good jobs for women?” ask, “What are high-demand careers in 2024 for people with diverse skills and backgrounds?”

3. Encourage Diverse Perspectives

When designing prompts, actively seek diverse perspectives and viewpoints. This ensures that the AI considers a broader range of responses.

- Tip: Ask open-ended questions that invite multiple perspectives, such as, “What are the qualities of successful leaders across different industries and cultures?”

4. Review and Refine Prompts Regularly

AI prompts should be reviewed periodically to ensure that they are free from unintended biases. Prompt engineers should be proactive in refining prompts over time.

- Tip: Test different versions of your prompts and compare the results to ensure they’re producing fair outcomes.

5. Collaborate with Diverse Teams

Working with a diverse team can help identify biases that you may not notice. Multiple perspectives during the prompt design process can result in more balanced and inclusive outputs.

- Tip: Involve team members from different backgrounds when drafting and reviewing AI prompts to spot potential biases.

6. Use Bias-Detection Tools

Incorporate tools designed to detect and mitigate bias in AI-generated content. These tools can provide real-time feedback on your prompts and highlight areas where bias might be present.

- Tip: Use tools like IBM’s AI Fairness 360 or Google’s What-If Tool to monitor the fairness of AI outputs and adjust your prompts accordingly.

7. Cognitive Bias in AI

Cognitive bias in AI happens when human biases unintentionally shape how AI models are built. These biases, stemming from developers’ experiences or preferences, can influence data selection or how results are interpreted, leading to skewed outputs.

- Example: If a developer favors certain cultural norms or demographics, the AI model may replicate those biases, skewing outputs in ways that reflect these preferences rather than objective data.

7. What Are the Ethical Implications of Bias in AI Prompts?

Bias in AI prompts is not just a technical issue; it’s an ethical one. When working with AI, you have a responsibility to ensure that your prompts are designed in ways that prevent harm and promote fairness. Here are some key ethical considerations to keep in mind:

1. The Impact of Biased AI on Marginalized Groups

AI bias can disproportionately affect marginalized or underrepresented groups, reinforcing existing inequalities. As an AI user or prompt designer, it’s crucial to recognize the social implications of biased outputs.

- Example: A biased AI tool used in recruitment may systematically favor certain demographics over others, limiting opportunities for marginalized groups.

2. The Responsibility of Developers and Engineers

Prompt engineers and AI developers play a critical role in preventing bias. Ethically, they must ensure that the systems they build are fair, transparent, and inclusive. Bias in AI can lead to real-world consequences, making ethical considerations essential in the design process.

- Tip: Include ethical reviews and testing at every stage of the AI development process to catch potential biases early.

3. Transparency in AI Decision-Making

One ethical challenge is the “black box” nature of AI systems, where users don’t always know how decisions are being made. Ensuring transparency is essential to build trust and accountability in AI.

- Tip: Be open about how AI models are trained, what data they use, and how decisions are made. This transparency allows users to assess the potential for bias and seek corrections.

4. Fairness in AI Use

AI systems should strive to promote fairness by being neutral and not favoring any particular group or outcome. Designers and users alike have an ethical responsibility to use AI tools in ways that are fair and just.

- Example: In healthcare, an AI system should provide treatment recommendations equally, regardless of a patient’s race or socio-economic background.

5. Informed Consent and User Awareness

Ethically, users should be informed when they are interacting with AI systems and understand how the AI operates. This includes knowing how the system processes prompts and what data influences the results.

- Tip: Always disclose when AI is being used, especially in scenarios where decisions might have significant personal or social impacts, such as hiring, education, or healthcare.

6. Continuous Monitoring and Ethical Oversight

AI systems are not static—they evolve over time as they learn from new data. This means ongoing monitoring is necessary to ensure that bias doesn’t creep in as models adapt to new information.

- Tip: Regularly audit AI systems for bias and ethical issues, and make improvements as needed to maintain fairness and inclusivity.
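Regular audits are easier to act on when each one produces a number that can be tracked over time. The sketch below assumes you record one fairness score per audit period, where higher is better, and flags periods that fall below a floor or drop sharply from the previous audit. The function name, the score, both thresholds, and the sample history are hypothetical.

```python
def flag_audits(history, floor=0.8, max_drop=0.1):
    """Flag audit periods that need attention.

    history: list of (period_label, score) in chronological order,
             where a higher score means a fairer result
    floor: lowest acceptable score
    max_drop: largest acceptable decrease from the previous audit
    """
    flagged = []
    previous = None
    for label, score in history:
        reasons = []
        if score < floor:
            reasons.append("below floor")
        if previous is not None and previous - score > max_drop:
            reasons.append("sharp drop")
        if reasons:
            flagged.append((label, reasons))
        previous = score
    return flagged

# Invented audit history
history = [("2024-Q1", 0.95), ("2024-Q2", 0.92), ("2024-Q3", 0.78), ("2024-Q4", 0.81)]
print(flag_audits(history))
```

Checking for sharp drops as well as a fixed floor helps catch a system that is still above the threshold but getting worse quickly.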

Understanding the ethical implications of bias in AI prompts helps you create more responsible AI systems. By being mindful of fairness, transparency, and social impact, you can ensure that the AI tools you design or use contribute positively to society.

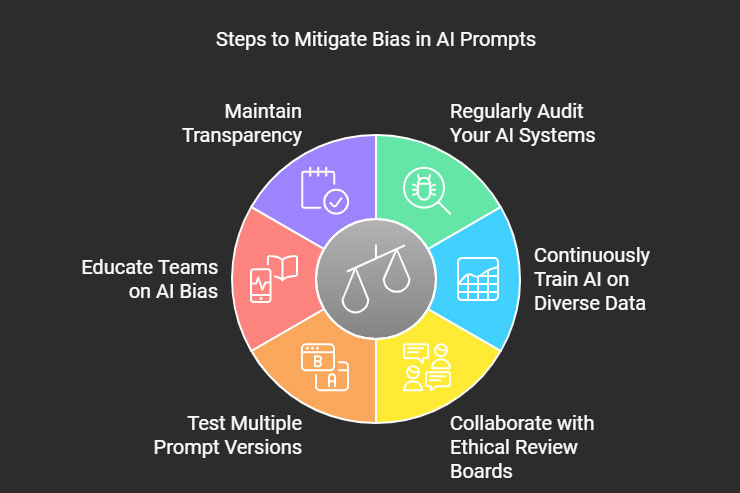

8. What Are the Next Steps to Mitigate Bias in AI Prompts?

Now that you have a deeper understanding of bias in AI prompts, it’s important to take action to create fairer and more inclusive AI systems. Here are the key steps you can take to mitigate bias:

1. Regularly Audit Your AI Systems

Bias can emerge over time as AI systems evolve, so regular audits are essential to ensure fairness. Use tools like IBM’s AI Fairness 360 to assess your models and detect any signs of bias.

2. Continuously Train AI on Diverse Data

Ensure that your AI models are trained on data that represents diverse populations and perspectives. This will reduce the likelihood of perpetuating stereotypes or biases present in historical data.

- Tip: Incorporate data that reflects a wide range of demographics, industries, and cultures.

3. Collaborate with Ethical Review Boards

Work with ethical AI review boards or diverse teams to ensure that your AI systems are being monitored for fairness. These boards can provide feedback and identify biases that may not be immediately obvious to developers.

- Example: Organizations like the Partnership on AI are focused on the ethical development and deployment of AI systems.

- Source: Partnership on AI

4. Test Multiple Prompt Versions

A/B testing multiple versions of prompts can help you detect bias. By comparing the AI’s responses to different phrasings, you can identify which prompts are leading to biased outputs and adjust them accordingly.

- Tip: Always review the responses generated by different prompts to check for patterns of bias.

5. Educate Teams on AI Bias

Ensure that everyone involved in creating or using AI systems is aware of the potential for bias and understands how to mitigate it. Training in ethical AI practices should be a standard part of development and implementation.

- Example: Courses like those offered by AI Ethics Lab provide training and resources on responsible AI development.

- Source: AI Ethics Lab

6. Maintain Transparency

Always be transparent about how your AI models are trained, what data is used, and how decisions are made. This builds trust and allows for accountability when biases are detected.

- Tip: Publish documentation or white papers on the ethical guidelines and safeguards you’ve implemented to mitigate bias.

9. How Can You Use AI Responsibly to Avoid Bias?

Using AI responsibly goes beyond identifying and correcting bias; it involves a commitment to fairness, transparency, and accountability throughout the entire AI development lifecycle. Here are some key takeaways for responsible AI use:

1. Prioritize Fairness in AI from the Start

From the moment you start developing AI systems, make fairness a core principle. Ensure that the design process, data selection, and prompt creation are all geared toward minimizing bias.

- Source: AI Now Institute advocates for fairness and accountability in AI research and development.

2. Build Diverse Teams

AI systems reflect the values and perspectives of the people who create them. By involving a diverse group of people in the design and review process, you increase the likelihood of identifying and addressing biases.

- Source: The AI4ALL initiative promotes diversity in AI by providing education and mentorship to underrepresented groups in the field of AI.

- Source: AI4ALL

3. Use Bias Detection Tools Regularly

Incorporate bias detection tools into your workflow to catch any biases early. Tools like Google’s What-If Tool help you explore the impact of different inputs on AI outputs and flag any biased patterns.

- Source: Google’s What-If Tool

4. Hold AI to High Ethical Standards

Responsible AI use means holding yourself accountable for the ethical implications of your AI systems. Whether you’re developing or using AI, ethical reviews and monitoring should be an ongoing process.

- Tip: Implement policies and guidelines around the ethical use of AI, similar to those promoted by OpenAI, which emphasizes responsible AI development.

- Source: OpenAI’s Charter

5. Encourage User Feedback

Allow users of your AI systems to provide feedback when they encounter biased outputs. This feedback loop can help you continuously improve your models and ensure that they’re producing fair results.

- Tip: Incorporate easy-to-use reporting mechanisms that allow users to flag problematic outputs.
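A reporting mechanism can start as a simple log of flagged outputs. The sketch below is a minimal in-memory version; the class names `BiasReport` and `FeedbackLog`, the fields, and the category labels are hypothetical, and a real system would add storage, access control, and a review process.

```python
from collections import Counter
from dataclasses import dataclass, field
from datetime import datetime, timezone

@dataclass
class BiasReport:
    prompt: str
    output: str
    category: str  # e.g. "stereotype", "exclusion", "misinformation"
    note: str = ""
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

class FeedbackLog:
    """Collects user reports so recurring problems become visible."""

    def __init__(self):
        self._reports = []

    def flag(self, prompt, output, category, note=""):
        """Record one user report and return it."""
        report = BiasReport(prompt, output, category, note)
        self._reports.append(report)
        return report

    def summary(self):
        """Count reports per category."""
        return dict(Counter(report.category for report in self._reports))

log = FeedbackLog()
log.flag("Suggest careers for women", "Nursing, teaching", "stereotype")
log.flag("Suggest careers for men", "Engineering, piloting", "stereotype")
log.flag("Describe a professional hairstyle", "Only straight hair styles", "exclusion")
print(log.summary())
```

Storing the prompt alongside the output makes it possible to reproduce a reported problem and to tell whether the wording or the model is the more likely cause.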
