AI Prompt Learning Center

AI Prompt Evaluation: Techniques for Better Outputs

Artificial intelligence (AI) is transforming industries by enhancing tasks from content creation to data analysis. The effectiveness of AI-generated outputs is directly tied to the quality of the prompts we provide.

As AI becomes integral to daily workflows, crafting effective prompts and evaluating outputs is essential. High-quality prompts yield accurate and relevant results, while poor ones can lead to misleading or harmful outputs.

Mastering prompt evaluation empowers users to maximize AI’s potential, make informed decisions, and stay competitive in an AI-driven world.

What you will learn in the article:

- What are AI prompt fundamentals, and why are they key to generating high-quality outputs?

- How can you craft effective prompts, from ensuring clarity to advanced prompt engineering?

- What methods can you use to evaluate AI outputs, both quantitatively and qualitatively?

- How does the iterative improvement process work, and why is continuous refinement crucial?

- What tools and platforms can assist in the evaluation process?

- How do real-world case studies illustrate the application of these principles?

- What future trends are shaping the landscape of AI evaluation?

1. Understanding AI Prompts

A. Definition and Importance

An AI prompt is a specific instruction guiding an AI system to produce a desired output. It shapes the AI’s responses and directly impacts the quality, relevance, and usefulness of the generated content.

Effective prompts are the cornerstone of successful AI interactions. They can:

- Improve accuracy and relevance of AI outputs

- Enhance the efficiency of AI-assisted tasks

- Minimize the need for multiple iterations or clarifications

- Reduce the risk of misinterpretation or biased results

B. Key Components of Effective Prompts

To create high-quality AI prompts, consider incorporating these essential elements:

- Clarity: Use precise language to convey your intentions unambiguously.

- Specificity: Provide detailed instructions and parameters to guide the AI’s response.

- Context: Offer relevant background information to help the AI understand the broader picture.

- Constraints: Set clear boundaries and limitations for the AI’s output.

- Desired format: Specify the preferred structure or style of the response.

- Examples (when applicable): Provide sample inputs and outputs to illustrate your expectations.

C. Common Pitfalls in Prompt Creation

Avoid these common mistakes to enhance the quality of your AI prompts:

- Ambiguity: Vague or unclear instructions can lead to misinterpretation.

- Overcomplication: Unnecessarily complex prompts may confuse the AI or produce convoluted outputs.

- Lack of context: Failing to provide sufficient background information can result in irrelevant or misaligned responses.

- Bias introduction: Unintentionally incorporating personal biases in prompts can skew AI outputs.

- Ignoring ethical considerations: Failing to account for potential ethical implications of the prompt or expected output.

- Overlooking format specifications: Not clearly defining the desired format or structure of the output.

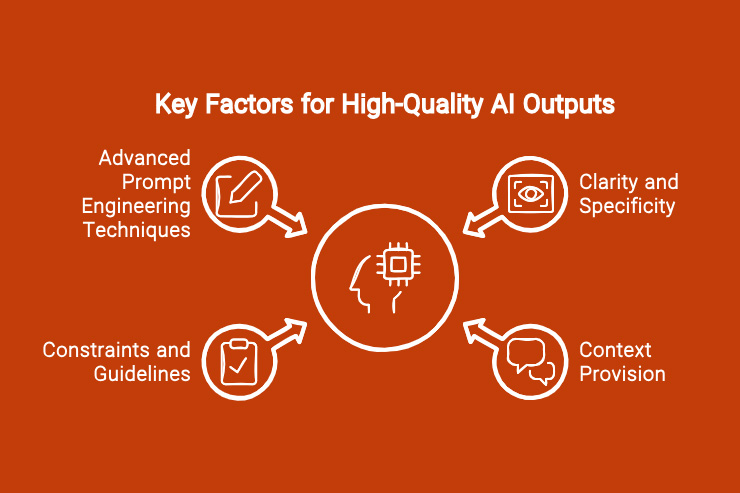

2. Crafting High-Quality Prompts

Mastering the art of crafting high-quality prompts is essential for achieving optimal AI outputs. Let’s explore key techniques and strategies to enhance your prompt engineering skills.

A. Clarity and Specificity

Clear and specific prompts are the foundation of effective AI interactions. To achieve this:

- Use precise language: Avoid ambiguity and clearly state your requirements.

- Break down complex tasks: Divide multi-step processes into smaller, manageable prompts.

- Specify desired output format: Clearly state how you want the information presented (e.g., bullet points, paragraphs, table).

- Quantify when possible: Use numbers or ranges to define expectations (e.g., “Provide 3-5 examples”).

Example: Vague prompt: “Tell me about climate change.” Improved prompt: “Summarize the three main causes of climate change and their potential impacts, providing one scientific study supporting each cause.”

B. Context Provision

Providing context helps the AI understand the broader picture and generate more relevant outputs.

- Offer background information: Briefly explain the situation or problem you’re addressing.

- Define the target audience: Specify who the information is for to adjust tone and complexity.

- State the purpose: Explain why you’re seeking this information or what you plan to do with it.

Example: Basic prompt: “Write a paragraph about renewable energy.” Contextual prompt: “Write a paragraph about renewable energy for a high school science textbook. Focus on how it differs from non-renewable energy sources and its potential to mitigate climate change.”

C. Incorporating Constraints and Guidelines

Setting clear boundaries helps steer the AI towards your desired output.

- Set word or character limits: Specify the desired length of the response.

- Define tone and style: Indicate whether you want formal, casual, technical, or creative language.

- Specify excluded topics: Mention any areas or aspects you don’t want the AI to cover.

- Indicate required elements: List any specific points or data that must be included.

Example: Unconstrained prompt: “Write about the benefits of exercise.” Constrained prompt: “In approximately 150 words, describe three scientifically-proven benefits of regular exercise for mental health. Use a friendly, motivational tone suitable for a general audience blog post.”

D. Prompt Engineering Techniques

Advanced prompt engineering techniques can significantly enhance the quality and relevance of AI outputs.

Zero-shot prompting:

- Definition: Asking the AI to perform a task without providing examples.

- Best for: Simple tasks or when working with advanced language models.

- Example: “Explain the concept of photosynthesis in simple terms.”

Few-shot prompting:

- Definition: Providing a few examples to guide the AI’s response.

- Best for: Complex tasks or when seeking specific formats/styles.

- Example: “Translate the following English phrases to French: Hello -> Bonjour Good night -> Bonne nuit How are you? -> “

Chain-of-thought prompting:

- Definition: Guiding the AI through a step-by-step reasoning process.

- Best for: Complex problem-solving or when explanation of reasoning is needed.

- Example: “Solve this math problem step-by-step: If a train travels 120 km in 2 hours, what is its average speed in km/h? Step 1: Identify the given information Step 2: Recall the formula for average speed Step 3: …”

3. Comparing Different AI Prompts Evaluation Techniques

| Technique | Pros | Cons | Best Use Cases |

| Human Review | – Provides subjective, qualitative feedback

– Assesses relevance, coherence, and appropriateness – Evaluates clarity, accuracy, and naturalness of language |

– Can be time-consuming and expensive

– Introduces human bias – Lacks quantitative metrics |

– Evaluating prompts for sensitive or high-stakes applications

– Assessing prompts for user engagement and helpfulness |

| Subjective Evaluation | – Gathers direct user feedback and impressions

– Provides qualitative insights on prompt quality – Helps identify areas for improvement |

– Relies on subjective opinions

– Lacks standardized evaluation criteria – Results can vary based on user demographics |

– Evaluating prompts for specific user groups

– Gathering initial feedback on prompt quality |

| Comparative Analysis | – Compares generated outputs to expectations or other sources

– Evaluates how well prompts address queries and provide relevant information – Assesses overall quality and usefulness of outputs |

– Requires defining clear evaluation criteria

– Can be time-consuming to compare multiple prompts – Relies on subjective judgments |

– Comparing prompts for similar queries or use cases

– Evaluating prompts for specific information retrieval tasks |

| Metrics Analysis | – Provides quantitative metrics to measure prompt quality

– Enables tracking improvements over time – Can be automated using tools or algorithms |

– Requires defining appropriate metrics

– May not capture all aspects of prompt quality – Lacks qualitative insights |

– Evaluating prompts for consistency and correctness

– Tracking prompt quality improvements over time |

| User Feedback Surveys | – Gathers quantitative data on user satisfaction

– Provides numerical ratings and specific feedback – Enables gathering feedback from a larger user base |

– Requires designing effective survey questions

– Relies on user participation and response rates – May not provide in-depth qualitative insights |

– Evaluating prompts for user satisfaction and usability

– Gathering feedback from a wide range of users |

| Task Completion Rates | – Measures the effectiveness of prompts in assisting users

– Provides quantitative metrics like task completion time and accuracy – Helps identify prompts that are most effective in achieving user goals |

– May not capture all aspects of prompt quality

– Requires defining clear tasks and success criteria – Can be challenging to isolate the impact of prompts |

– Evaluating prompts for specific user tasks or workflows

– Comparing prompts for their ability to assist users in achievi |

4. Evaluating AI Outputs

Once you’ve crafted an effective prompt and received an AI-generated output, the next crucial step is evaluation. Thorough assessment ensures the quality, reliability, and usefulness of the AI’s response.

A. Relevance to the Original Prompt

Assess how well the AI’s output aligns with your initial request:

- Compare the response to each component of your prompt.

- Check if all specified requirements were addressed.

- Evaluate whether the AI stayed on topic or veered into unrelated areas.

B. Accuracy and Factual Correctness

Verify the reliability of the information provided:

- Cross-reference key facts with reputable sources.

- Look for internal consistency within the response.

- Be wary of confident-sounding but incorrect statements.

- Check dates, statistics, and quoted information for accuracy.

C. Coherence and Logical Flow

Examine the structure and organization of the AI’s response:

- Ensure ideas are presented in a logical sequence.

- Look for smooth transitions between concepts.

- Check if the conclusion (if applicable) follows logically from the presented information.

- Assess whether the response maintains a consistent level of detail throughout.

D. Creativity and Originality (When Applicable)

For tasks requiring creative outputs, evaluate the AI’s innovative approach:

- Look for unique perspectives or novel combinations of ideas.

- Assess whether the output goes beyond mere recombination of existing information.

- Consider if the response offers unexpected but valuable insights.

- Evaluate the balance between creativity and adherence to the prompt’s requirements.

E. Ethical Considerations and Bias Detection

Critically examine the output for potential ethical issues or biases:

- Look for stereotyping or unfair representation of groups.

- Assess whether the response promotes harmful or discriminatory views.

- Check for balance in perspectives, especially on controversial topics.

- Consider the potential real-world impact if this information were to be used or published.

- Evaluate the use of gendered language and ensure it’s appropriate for the context.

“Those who know how to prompt the AI to do their bidding, and to do it faster and better and more creatively than anyone else, is going to be golden.” – Nikhil Dey, AI expert and entrepreneur

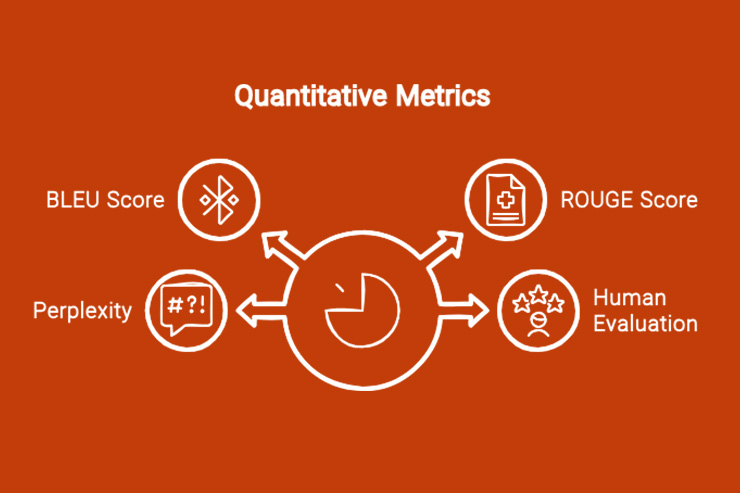

5. Quantitative Metrics for Output Evaluation

While qualitative assessment is crucial, quantitative metrics provide objective measures to evaluate AI outputs. These metrics are particularly useful for comparing different models or tracking improvements over time.

A. BLEU Score for Translation Tasks

BLEU (Bilingual Evaluation Understudy) is a widely used metric for evaluating machine translations.

- Purpose: Measures how similar the machine-translated text is to a reference human translation.

- Scale: 0 to 1, where 1 indicates a perfect match.

- Interpretation: Higher scores suggest better translations, but context is crucial.

- Limitations: Focuses on precision and doesn’t account for all aspects of translation quality.

Example: A BLEU score of 0.7 for an English to French translation indicates high similarity to human reference translations, but may still contain minor errors or awkward phrasing.

B. ROUGE Score for Summarization

ROUGE (Recall-Oriented Understudy for Gisting Evaluation) is commonly used to evaluate automatic summarization and machine translation.

- Purpose: Compares AI-generated summaries to human-created reference summaries.

- Types: ROUGE-N (n-gram overlap), ROUGE-L (longest common subsequence), ROUGE-S (skip-gram concurrence).

- Scale: 0 to 1, where 1 indicates a perfect match.

- Interpretation: Higher scores suggest better summarization, but multiple ROUGE metrics should be considered together.

Example: A ROUGE-1 score of 0.6 and a ROUGE-L score of 0.5 for a news article summary indicate good unigram overlap and reasonable sequential match with reference summaries.

C. Perplexity for Language Models

Perplexity measures how well a probability model predicts a sample, often used to evaluate language models.

- Purpose: Assesses the model’s ability to predict text.

- Interpretation: Lower perplexity indicates better prediction (less “surprised” by the text).

- Usage: Comparing models or evaluating a model’s performance on different types of text.

- Limitations: Doesn’t directly measure the quality or coherence of generated text.

Example: A GPT model with a perplexity of 20 on a test set is likely to generate more fluent and contextually appropriate text than one with a perplexity of 50.

D. Human Evaluation Scales (e.g., Likert Scale)

While not strictly a quantitative metric, human evaluation using standardized scales can provide numerical data for analysis.

- Purpose: Captures subjective aspects of quality that automated metrics might miss.

- Common scales:

- Likert scale (e.g., 1-5 or 1-7 rating)

- Semantic differential scale (e.g., pairs of opposing adjectives)

- Aspects to evaluate: Fluency, coherence, relevance, creativity, etc.

- Analysis: Calculate average scores, compare across different prompts or models.

Example: On a 5-point Likert scale for “coherence,” an average score of 4.2 across 100 human raters suggests the AI output is generally well-structured and logical.

When using these quantitative metrics, it’s important to:

- Choose metrics appropriate for your specific task and goals.

- Use multiple metrics for a more comprehensive evaluation.

- Consider the limitations and potential biases of each metric.

- Combine quantitative metrics with qualitative assessments for a holistic evaluation.

6. Qualitative Assessment Techniques

While quantitative metrics provide objective measures, qualitative assessment techniques offer deeper insights into the nuances of AI-generated content. These methods help evaluate aspects that are difficult to quantify, such as creativity, appropriateness, and overall quality.

A. Expert Review

Leveraging the knowledge and experience of subject matter experts can provide valuable insights into the quality and accuracy of AI outputs.

Process:

- Identify relevant experts in the field.

- Develop a structured review format (e.g., questionnaire, rubric).

- Have experts assess the AI output based on predefined criteria.

- Analyze feedback and identify common themes or concerns.

Benefits:

- Deep, nuanced evaluation of content accuracy and quality.

- Identification of subtle errors or misrepresentations.

- Insights into industry-specific standards and expectations.

Considerations:

- Can be time-consuming and costly.

- Potential for subjective bias based on individual expert opinions.

Example: For a medical AI assistant, having a panel of physicians review its responses to patient queries can ensure accuracy and adherence to best practices in patient communication.

B. User Feedback Collection

Gathering feedback from end-users provides insights into the practical effectiveness and user satisfaction of AI outputs.

Methods:

- Surveys (e.g., post-interaction questionnaires)

- Interviews (structured or semi-structured)

- Focus groups

- In-app feedback mechanisms (e.g., thumbs up/down buttons)

Aspects to evaluate:

- Usefulness of the AI output

- Clarity and understandability

- User satisfaction

- Areas for improvement

Analysis techniques:

- Thematic analysis of open-ended responses

- Statistical analysis of quantitative feedback

- Sentiment analysis of user comments

Example: For an AI-powered customer service chatbot, analyzing user feedback can reveal common pain points, frequently asked questions that the AI struggles with, and overall user satisfaction levels.

C. A/B Testing of Different Outputs

Comparing multiple AI-generated outputs helps identify the most effective approaches and refine prompt engineering techniques.

Process:

- Generate multiple outputs using different prompts or models.

- Randomly present these variations to users or evaluators.

- Collect feedback or measure performance metrics for each variant.

- Analyze results to determine which version performs best.

Applications:

- Comparing different prompt formulations

- Evaluating the effectiveness of various AI models

- Testing the impact of different content styles or formats

Example: When developing an AI writing assistant, A/B testing different prompt structures for generating article introductions can help identify which approach consistently produces more engaging and effective openings.

D. Comparative Analysis with Human-Generated Content

Benchmarking AI outputs against human-created content provides a baseline for quality and helps identify areas where AI excels or needs improvement.

Process:

- Collect both AI-generated and human-created content for the same task.

- Develop a set of evaluation criteria (e.g., accuracy, creativity, structure).

- Have independent evaluators assess both sets of content without knowing the source.

- Compare ratings and analyze differences between AI and human-generated content.

Benefits:

- Identifies strengths and weaknesses of AI compared to human performance.

- Helps set realistic expectations for AI capabilities.

- Guides refinement of AI models and prompts.

Considerations:

- Ensure a fair comparison by matching the skill level of human content creators to the task complexity.

- Be aware of potential biases in human evaluators.

Example: For a content marketing AI, comparing its product descriptions against those written by experienced copywriters can highlight areas where the AI needs improvement in

Tip: Combine qualitative assessments with quantitative metrics to gain a holistic understanding of your AI system’s performance, ensuring optimization for both measurable outcomes and real-world effectiveness.

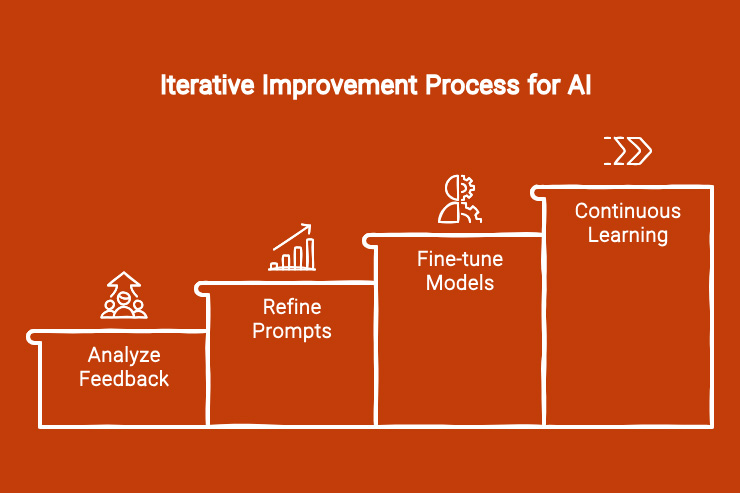

7. Iterative Improvement Process

The evaluation of AI prompts and outputs is not a one-time task but an ongoing process of refinement and optimization. This iterative approach ensures that your AI system continues to improve over time, adapting to new challenges and user needs.

A. Analyzing Feedback and Metrics

The first step in the iterative improvement process is to thoroughly analyze the data collected through various evaluation methods.

- Aggregate quantitative metrics: Compile scores from methods like BLEU, ROUGE, and perplexity measurements.

- Synthesize qualitative feedback: Identify common themes and insights from expert reviews, user feedback, and comparative analyses.

- Look for patterns: Identify consistent strengths or weaknesses in AI outputs across different prompts or use cases.

- Prioritize issues: Rank problems based on their impact on output quality and user satisfaction.

Example: If BLEU scores for translations are consistently low for idiomatic expressions, and user feedback highlights confusion with colloquialisms, improving the handling of non-literal language could be a priority.

B. Refining Prompts Based on Output Quality

Use the insights gained from your analysis to refine your prompts and improve output quality.

- Identify prompt weaknesses: Determine which aspects of your prompts are leading to suboptimal outputs.

- Experiment with prompt structures: Try different phrasings, levels of detail, or prompt engineering techniques.

- Add or refine constraints: Incorporate more specific guidelines or examples in your prompts to address identified issues.

- Test variations: Use A/B testing to compare the performance of refined prompts against original versions.

Example: If an AI writing assistant struggles with maintaining consistent tone, you might refine the prompt to include more explicit tone guidelines and examples of the desired style.

C. Fine-tuning AI Models (If Applicable)

For organizations with access to the underlying AI models, fine-tuning can be a powerful way to improve performance.

- Prepare a dataset: Compile a set of high-quality prompt-output pairs that represent ideal performance.

- Choose fine-tuning parameters: Decide on learning rate, number of epochs, and other hyperparameters.

- Perform fine-tuning: Use the prepared dataset to adjust the model’s weights.

- Evaluate performance: Compare the fine-tuned model’s outputs to the original model using your established evaluation metrics.

- Iterate: If necessary, adjust your fine-tuning approach and repeat the process.

Example: A company using GPT-3 for customer service might fine-tune the model on a dataset of exemplary customer interactions to improve its performance in that specific domain.

D. Continuous Learning and Adaptation

The AI landscape is constantly evolving, as are user needs and expectations. Establish processes for ongoing improvement and adaptation.

- Regular evaluation cycles: Set up a schedule for periodic re-evaluation of your AI system’s performance.

- Stay informed: Keep up with the latest developments in AI research and prompt engineering techniques.

- Monitor user behavior: Track changes in how users interact with your AI system over time.

- Adapt to feedback: Continuously incorporate user feedback and expert insights into your improvement process.

- Anticipate changes: Try to predict future needs or challenges and proactively adjust your AI system.

Example: An AI-powered news summarization tool might need to adapt its prompts and evaluation criteria as new types of media (e.g., interactive articles, VR news experiences) become more common.

8. Tools and Platforms for AI Evaluation

As the field of AI evolves, so do the tools and platforms designed to assist in evaluating and improving AI systems. Here are some notable options:

A. OpenAI’s Evaluation Tools

GPT-3 Playground:

- Purpose: Interactive environment for testing and refining prompts.

Features:

- Real-time output generation

- Adjustable parameters (temperature, top-p, etc.)

- Ability to save and share prompts

OpenAI API:

- Purpose: Programmatic access to AI models for large-scale evaluation and integration.

Features:

- Supports various models (GPT-3, DALL-E, etc.)

- Customizable request parameters

- Usage tracking and analytics

B. Hugging Face’s Model Evaluation Features

Model Hub:

- Purpose: Repository of pre-trained models with built-in evaluation features.

Features:

- Community-driven model sharing and evaluation

- Integrated metrics for various NLP tasks

- Easy-to-use interfaces for model testing

Datasets:

- Purpose: Collection of datasets for training and evaluating models.

Features:

- Wide range of datasets for different tasks

- Standardized formats for easy integration

- Tools for dataset manipulation and analysis

Evaluate Library:

- Purpose: Streamlined evaluation of machine learning models.

Features:

- Support for various NLP metrics (BLEU, ROUGE, etc.)

- Easy integration with Hugging Face models and datasets

- Customizable evaluation pipelines

C. Custom Evaluation Pipelines

For organizations with specific needs, custom evaluation pipelines offer maximum flexibility and control.

Components might include:

- Data ingestion and preprocessing tools

- Model integration interfaces

- Metric calculation modules

- Visualization and reporting systems

- Feedback collection and analysis tools

Benefits:

- Tailored to specific use cases and requirements

- Integration with existing systems and workflows

- Proprietary evaluation metrics or methodologies

- Enhanced data security and privacy controls

When choosing tools and platforms, consider factors such as compatibility, scalability, ease of use, cost, community support, and customization options.

9. Case Studies

Let’s explore three diverse case studies that highlight different aspects of AI prompt optimization and output evaluation.

A. Successful Prompt Optimization in Customer Service Chatbots

Company: GlobalTech Solutions Challenge: Improving the accuracy and helpfulness of AI-powered customer service chatbot responses.

Approach:

- Analyzed customer interactions to identify common issues and pain points.

- Developed targeted prompts for different customer service scenarios.

- Implemented A/B testing to compare different prompt formulations.

- Used BLEU scores and customer satisfaction ratings for evaluation.

- Iteratively refined prompts based on performance metrics and customer feedback.

Results:

- 30% increase in first-contact resolution rate

- 25% reduction in escalation to human agents

- 15% improvement in customer satisfaction scores

Key Takeaway: Tailoring prompts to specific scenarios and continuously refining them based on quantitative metrics and qualitative feedback can significantly enhance chatbot performance.

B. Output Quality Improvement in Content Generation

Organization: EduLearn Platform Challenge: Enhancing the quality and educational value of AI-generated study materials.

Approach:

- Collaborated with subject matter experts to define quality criteria.

- Developed a comprehensive prompt template incorporating learning objectives, target audience, and desired content structure.

- Implemented a multi-stage evaluation process:

- Automated checks for factual accuracy and plagiarism

- Expert review for educational value and clarity

- Student feedback on usefulness and engagement

- Used machine learning to analyze successful content and refine prompt generation.

Results:

- 40% increase in student engagement with AI-generated materials

- 35% improvement in learning outcomes as measured by quiz performance

- 50% reduction in content revision cycles

Key Takeaway: Combining domain expertise with a multi-faceted evaluation approach can lead to significant improvements in AI-generated educational content.

C. Ethical Considerations in AI-Generated Search Results

Company: TruthSeek Search Engine Challenge: Ensuring fairness and reducing bias in AI-generated search result snippets.

Approach:

- Formed an ethics board to define fairness criteria.

- Developed prompts that explicitly instructed the AI to consider multiple perspectives and avoid biased language.

- Implemented a bias detection system using NLP techniques.

- Conducted regular audits of search results for sensitive topics.

- Created a user feedback mechanism to report biased or unfair results.

Results:

- 60% reduction in user-reported bias incidents

- 45% improvement in diversity of perspectives presented for controversial topics

- 25% rise in daily active users

Key Takeaway: Proactively addressing ethical considerations in AI prompt design and implementing robust evaluation systems can enhance the fairness and trustworthiness of AI-generated content.

10. Future Trends in AI Prompt and Output Evaluation

As AI technology continues to evolve, so too will the methods and tools for evaluating prompts and outputs.

A. Automated Evaluation Systems

Future AI systems may incorporate built-in evaluation mechanisms, continuously assessing their own outputs and adjusting prompts in real-time.

Potential developments:

- Self-critique AI models that can evaluate their own outputs

- Automated prompt optimization systems using reinforcement learning

- Integration of human feedback loops into automated systems for continuous improvement

B. Integration of Multi-Modal Evaluations

As AI systems become more versatile, evaluation methods will need to account for multiple modalities of input and output.

Emerging areas:

- Evaluation frameworks for text-to-image or image-to-text AI models

- Metrics for assessing the coherence between text, image, and audio in multi-modal AI outputs

- Holistic evaluation approaches that consider the interplay between different modalities

C. Advancements in Contextual Understanding

Future evaluation methods will likely place greater emphasis on assessing an AI’s understanding of context and nuance.

Potential focus areas:

- Metrics for evaluating cultural sensitivity and contextual appropriateness

- Assessment of AI’s ability to understand and generate humor, sarcasm, and subtle emotional cues

- Evaluation of long-term coherence and context retention in extended AI interactions

Resources

Books:

- “Designing and Evaluating Prompts for Large Language Models” by Emily M. Bender and Alvin Grissom II

- “Natural Language Processing with Transformers” by Lewis Tunstall, Leandro von Werra, and Thomas Wolf

- “AI Ethics” by Mark Coeckelbergh

Research Papers:

- “Language Models are Few-Shot Learners” by Brown et al. (2020)

- “Evaluating Large Language Models Trained on Code” by Chen et al. (2021)

- “On the Dangers of Stochastic Parrots: Can Language Models Be Too Big?” by Bender et al. (2021)

AI Communities and Forums for Further Learning:

- AI Stack Exchange

- r/MachineLearning on Reddit

- Hugging Face Forums

- OpenAI Community Forum

- AI Ethics Discussion Group on LinkedIn